Why AI Agents Are Replacing SaaS Dashboards in 2026

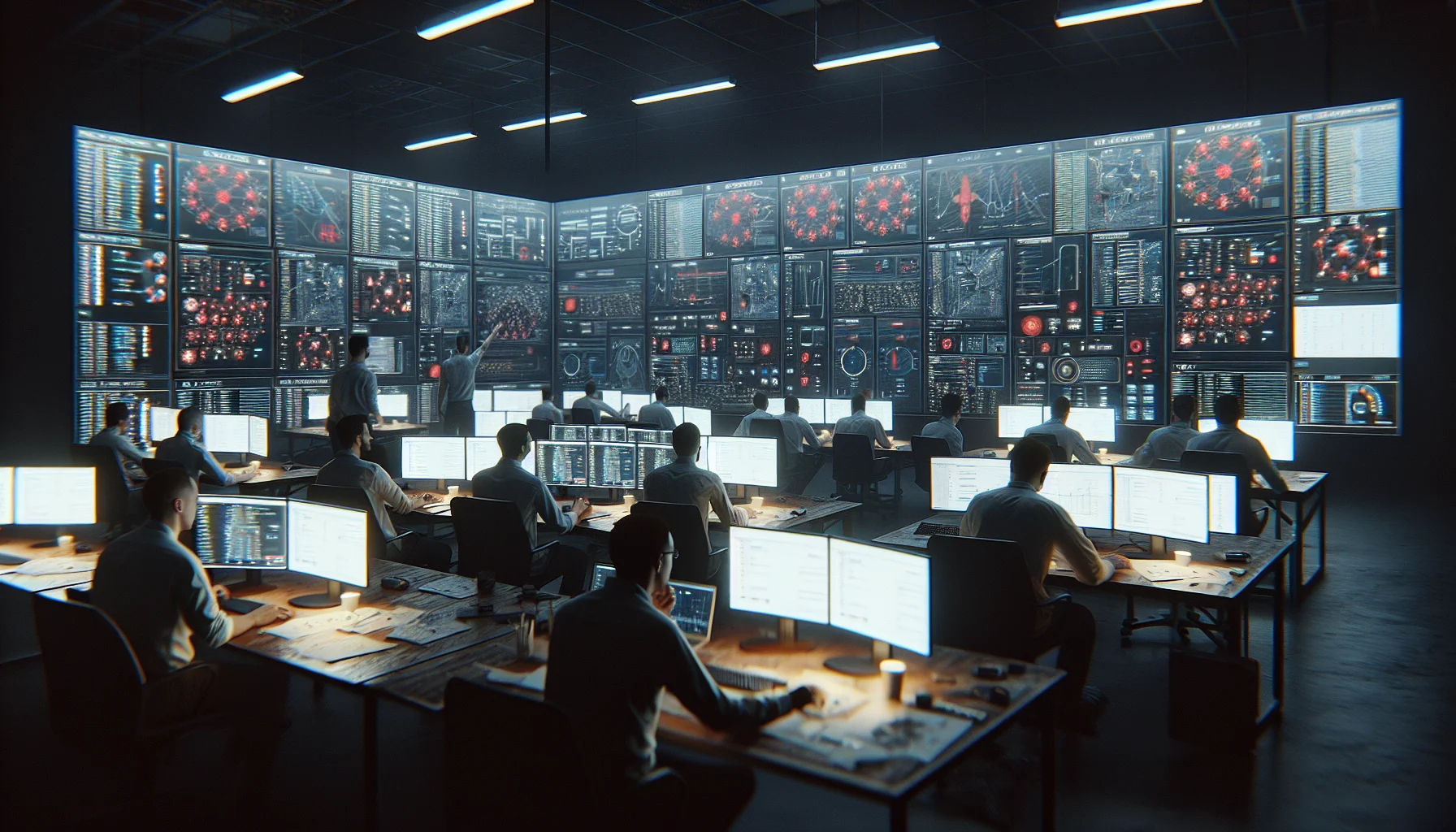

Enterprise teams are ditching traditional SaaS dashboards for autonomous AI agents that monitor, decide, and act. Here's what's driving the shift and what it means for software builders.

The Dashboard Era Is Ending

For the past fifteen years, enterprise software meant dashboards. Rows of charts, filterable tables, notification badges. Teams logged in, scanned metrics, made decisions, and logged out. The software was a mirror — it showed you data and waited for you to act.

In 2026, that model is breaking down. The fastest-growing enterprise tools don't have dashboards at all. They have agents — autonomous AI systems that monitor data streams, identify anomalies, make recommendations, and in many cases take action without human intervention.

What Changed

Three converging trends made this possible:

- Context windows grew large enough to hold real business state. With models supporting 1M+ token contexts, an agent can often fit a team's quarter of CRM data, support tickets, and revenue metrics into a single prompt, instead of seeing only a narrow slice at a time.

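- Tool use became reliable. Modern LLMs can call APIs, query databases, and trigger workflows through structured tool calling, where the model emits a typed request that the application can validate before running it. The "hallucination problem" hasn't disappeared, but a malformed or unexpected call can be rejected before it executes, which has reduced errors to acceptable rates for many business tasks.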

- The cost of inference dropped roughly 100x. Running an agent that checks your pipeline every hour can now cost less than the SaaS subscription it replaces.
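To make the tool-use point concrete, here is a minimal sketch of validating a model-proposed tool call before executing it. Everything in it is an assumption for illustration: the `get_deal` tool, the `TOOLS` registry, and the JSON shape with `name` and `arguments` keys are hypothetical and not tied to any particular vendor's API.

```python
import json
from typing import Any, Callable


def get_deal(deal_id: str) -> dict[str, Any]:
    # Stand-in for a real CRM lookup; returns canned data for illustration.
    return {"deal_id": deal_id, "stage": "negotiation"}


# Hypothetical registry: tool name -> (function, expected argument types).
TOOLS: dict[str, tuple[Callable[..., Any], dict[str, type]]] = {
    "get_deal": (get_deal, {"deal_id": str}),
}


class ToolCallError(ValueError):
    """Raised when a model-proposed tool call fails validation."""


def dispatch(raw_call: str) -> Any:
    """Parse, validate, and execute a tool call emitted by a model as JSON."""
    try:
        call = json.loads(raw_call)
    except json.JSONDecodeError as exc:
        raise ToolCallError(f"not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ToolCallError("tool call must be a JSON object")

    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        raise ToolCallError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ToolCallError("arguments must be a JSON object")

    func, schema = TOOLS[name]
    # Reject missing or unexpected arguments rather than guessing.
    if set(args) != set(schema):
        raise ToolCallError(f"expected arguments {sorted(schema)}, got {sorted(args)}")
    for key, expected in schema.items():
        if not isinstance(args[key], expected):
            raise ToolCallError(f"argument {key!r} must be {expected.__name__}")
    return func(**args)
```

The design choice worth noting is that the model never touches the function directly. It can only propose a call, and anything outside the registry or the declared argument types is refused.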

What Agent-First Software Looks Like

Consider a sales team using a traditional CRM. A rep logs in, filters deals by stage, notices a large opportunity has gone silent for two weeks, and writes a follow-up email. This sequence — observe, analyze, decide, act — takes 15-30 minutes and happens only when the rep remembers to check.

An agent-first CRM handles this differently. The agent continuously monitors deal activity. When it detects a stalled opportunity, it drafts a context-aware follow-up email, personalizes it based on the prospect's recent LinkedIn activity and company news, and either sends it or surfaces it for one-click approval. The rep's morning standup is replaced by a brief chat with the agent: "What needs my attention today?"
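The monitoring half of that loop is simple enough to sketch. The version below is a rough illustration, not a real CRM integration: the `Deal` fields, the `AUTO_SEND_LIMIT` value, and the `draft_follow_up` placeholder (which stands in for a model call) are all hypothetical. Only the two-week threshold comes from the example above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

STALL_THRESHOLD = timedelta(days=14)  # "silent for two weeks" from the example
AUTO_SEND_LIMIT = 10_000  # hypothetical: larger deals need one-click approval


@dataclass
class Deal:
    name: str
    value: float
    last_activity: datetime


@dataclass
class ProposedAction:
    deal: Deal
    draft: str
    needs_approval: bool


def draft_follow_up(deal: Deal) -> str:
    # Placeholder for a model call that would write a personalized email.
    return f"Hi, checking in on {deal.name}. Is anything blocking next steps?"


def review_pipeline(deals: list[Deal], now: datetime) -> list[ProposedAction]:
    """Propose a follow-up for every deal with no activity for STALL_THRESHOLD."""
    actions = []
    for deal in deals:
        if now - deal.last_activity >= STALL_THRESHOLD:
            actions.append(
                ProposedAction(
                    deal=deal,
                    draft=draft_follow_up(deal),
                    # Small deals could be sent automatically; big ones get surfaced.
                    needs_approval=deal.value > AUTO_SEND_LIMIT,
                )
            )
    return actions
```

Run on a schedule or on every CRM event, the list returned by `review_pipeline` is effectively the agent's answer to "What needs my attention today?"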

The Builder's Perspective

For software engineers, this shift changes what we build:

- Less UI, more orchestration. The frontend shrinks from complex SPAs to simple chat interfaces and approval flows.

- Event-driven architectures become essential. Agents need to react to changes in real time rather than poll for them on a schedule.

- Evaluation replaces QA. Testing an agent means building evaluation harnesses that measure decision quality over thousands of scenarios.

- Guardrails are the new feature. The most valuable code in an agent system is often the constraints — what the agent is not allowed to do.
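The last two points fit together naturally, so here is one small sketch covering both: a guardrail layer that constrains what an agent may do, and an evaluation harness that scores the guarded agent against labeled scenarios. All names and values (`Action`, `ALLOWED_KINDS`, `MAX_REFUND`, `toy_agent`) are hypothetical, and a real harness would run thousands of scenarios and measure more than exact-match accuracy.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    kind: str  # e.g. "send_email", "issue_refund", "escalate"
    amount: float = 0.0


# Guardrails: an explicit allowlist plus a spending cap (hypothetical values).
ALLOWED_KINDS = {"send_email", "issue_refund", "escalate"}
MAX_REFUND = 100.0


def check_guardrails(action: Action) -> Action:
    """Return the action if permitted, otherwise downgrade it to an escalation."""
    if action.kind not in ALLOWED_KINDS:
        return Action("escalate")
    if action.kind == "issue_refund" and action.amount > MAX_REFUND:
        return Action("escalate")
    return action


@dataclass(frozen=True)
class Scenario:
    event: dict
    expected_kind: str


def evaluate(agent: Callable[[dict], Action], scenarios: list[Scenario]) -> float:
    """Fraction of scenarios where the guarded agent picks the expected action kind."""
    if not scenarios:
        raise ValueError("need at least one scenario")
    hits = sum(
        check_guardrails(agent(s.event)).kind == s.expected_kind for s in scenarios
    )
    return hits / len(scenarios)


def toy_agent(event: dict) -> Action:
    # Stand-in for a model-backed policy: refunds whatever amount is requested.
    if event["type"] == "refund_request":
        return Action("issue_refund", event["amount"])
    return Action("send_email")
```

Calling `evaluate(toy_agent, scenarios)` returns a single accuracy number. Because the guardrails sit between the agent and the outside world, the harness measures the system a customer would actually interact with, not the raw model.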

What This Doesn't Replace

Not everything benefits from agents. Exploratory data analysis, creative design, strategic planning — tasks that require open-ended human judgment — still need rich interfaces. The dashboard isn't dying everywhere. It's dying in the places where the human was acting like an agent anyway: scanning data, applying rules, executing routine actions.

Looking Ahead

The companies that win in 2026 won't be the ones with the best dashboards. They'll be the ones whose agents make the best decisions. For builders, the question isn't whether to add AI to your product — it's whether your product should be an agent from the ground up.
